DataOps Implementation Guide April 18, 2022.

In every organization, multiple data-centric projects are manifesting in the form of a data warehouse, data lakes, big data, cloud data migration, BI reporting, data analytics and machine learning. While the budget and timeline are shrinking, the size as well as the complexity of the data projects and their data quality requirements are increasing.

Most software projects have adopted DevOps principles, but data integration and migration projects are in many ways still living under a rock. Data-centric development and management consistently lack the rigor and discipline required to execute and manage these large and complex data systems. With the advent of big data and cloud technology, this has become a huge problem.

This paper discusses the adoption of DataOps methodologies for the development and production phases of data pipeline implementations. The idea is to have an integrated DataOps framework that improves project success as well as the data quality and reliability of the data systems. We further analyse some of the bottlenecks, such as organizational culture and the lack of data test automation, along with the effect of the rigid wall between development and operations teams and its impact on data systems. Ultimately, we propose the DataOps framework as a solution to improve both the delivery of data projects and data quality.

The Data Problem and the DataOps Solution

Data-centric projects are becoming more prominent in size and complexity, making both project execution and data operations much more difficult.

From Development Perspective:

- Time to Market: The time required for data projects is increasing. Many cloud data migration projects have multi-year timelines. Data teams underestimate the complexity of data projects, resulting in last-minute surprises as well as cost overruns. Projects are delayed by testing issues that are discovered too late in the project lifecycle.

- Development Cost: Manual and repetitive tasks are still not automated. Manual effort is time-consuming, cost-intensive, and sometimes impossible. For example, manually testing big data volumes is impossible.

From Operations Perspective:

- User Dissatisfaction: Data quality is often an afterthought, and the resulting poor data quality leads to high user dissatisfaction.

- High Cost of Production Fixes: Lack of test automation has resulted in lots of refactoring or patchwork in production.

- Compliance and Data Governance: As data has become crucial for business, data quality and compliance around data have become extremely strict. Many compliance regulations such as GDPR, BCBS-239, SOX, Solvency II and CCPA require specific policies and security around data.

The application software world has resolved many of these issues by implementing the DevOps framework. It is time for the data world to borrow some of these ideas and adapt them.

What is DataOps?

The term “DevOps” was coined in 2009 by Patrick Debois. He noticed that many of the problems associated with software development and quality were related to partitioning of development and operations departments and lack of test automation. His idea was to integrate the development teams with the operations teams. Further, he proposed to automate the CICD pipeline, testing and production monitoring.

DataOps brings many DevOps ideas, such as agile development, CICD, continuous testing, white box monitoring, and the integration of development and operations teams, to data projects, and adds some data-specific considerations.

How to Implement DataOps?

To implement DataOps the organization must focus on three core ideas:

DataOps = 1. Culture + 2. Tools for Automation + 3. Processes

Step by Step Guide:

- Un-siloed development and operations teams

- Adopt agile & test-driven development (TDD)

- Link QA requirements with physical tests

- Treat everything as code & use code repository

- Create centralized QA test repository

- Create data repository/golden copy

- Create regression packs for testing

- Build CICD pipeline for automated build and deployment

- Implement white box monitoring for data quality in production

- Establish data quality governance

- Establish data quality issue resolution workflows

- Establish data quality dashboards

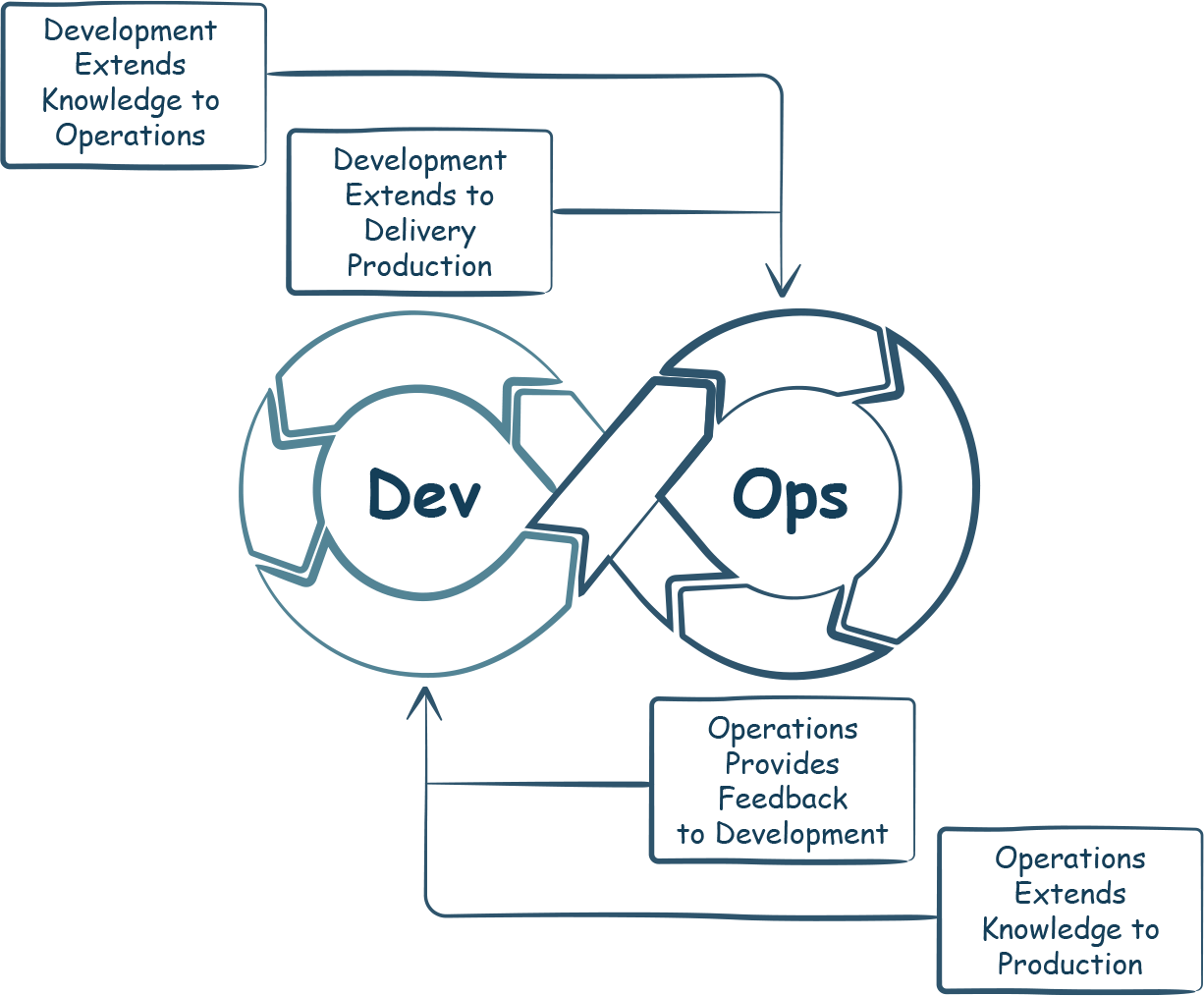

1. Un-Siloed Development and Operations Teams

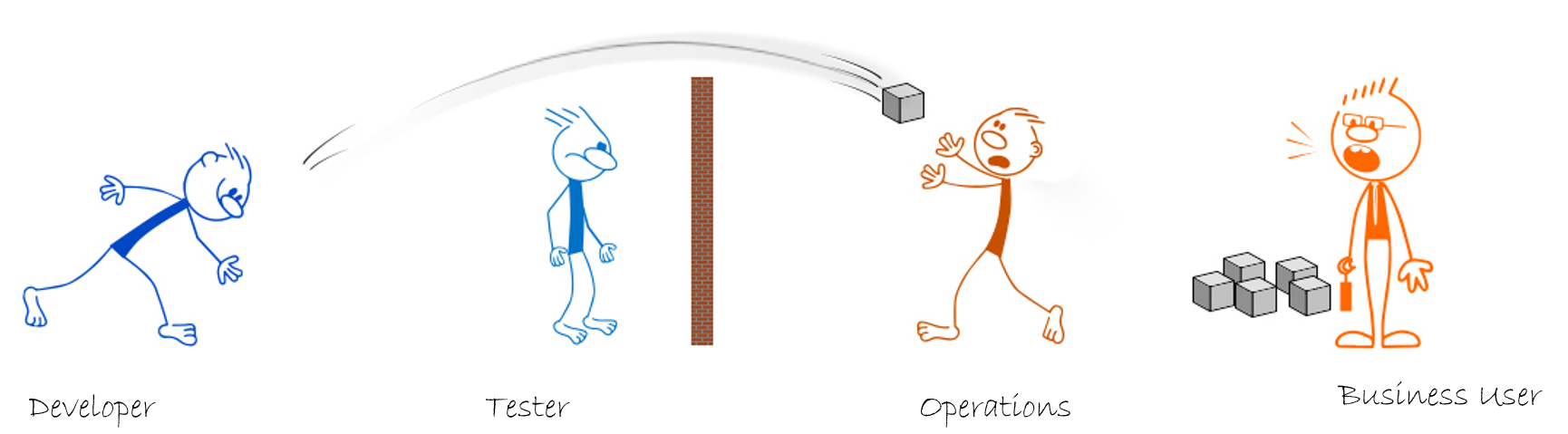

Data teams are usually divided into development and operations teams. Their roles also define the boundaries of their responsibilities. Developers and testers are on one side of the wall; business users, operations teams, and data stewards are on the other.

In almost all data integration projects, development teams develop and test ETL processes along with reports. Then they try to throw the code across the wall to the operations teams.

Operations teams never get involved in development. However, when data issues start appearing in production, business users become unhappy. The business users begin pointing fingers at the operations teams, who in turn point fingers at the QA team. The QA group usually pushes the blame onto the development team.

During development of ETL and release…

…and when data defects are found in production after release!

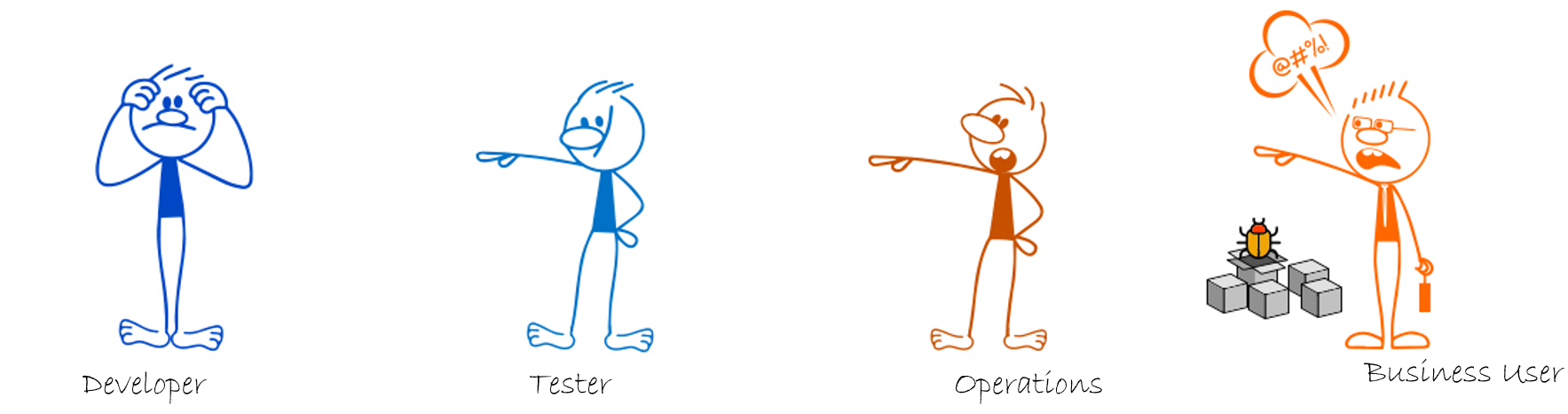

The root cause of this problem is the culture of the organization and teams. DataOps is about removing this wall of responsibilities.

The development team should be made responsible for data quality in production environments. For example, Whitebox Monitoring must be introduced so that some of the data quality tests are passed on from development to production as part of the release (read more in the White Box Monitoring section).

Business users cannot suddenly start complaining about data in production. Instead, the operations team and business users should identify the Data Audit Rules for validation and reconciliation of data and provide them to the development team as part of the requirements.

These small changes ensure that developers involve the business users and data stewards right from the beginning of the project. DataOps adoption transforms the organizational culture and automates every aspect of the SDLC, from test automation to production data monitoring. It removes the barriers between development and operations teams: there is no more throwing code over the wall and running away from responsibility.

DataOps transforms the culture of the organization.

With DataOps everyone is on the same side of the wall!

2. Adopt Agile & Test-Driven Development (TDD)

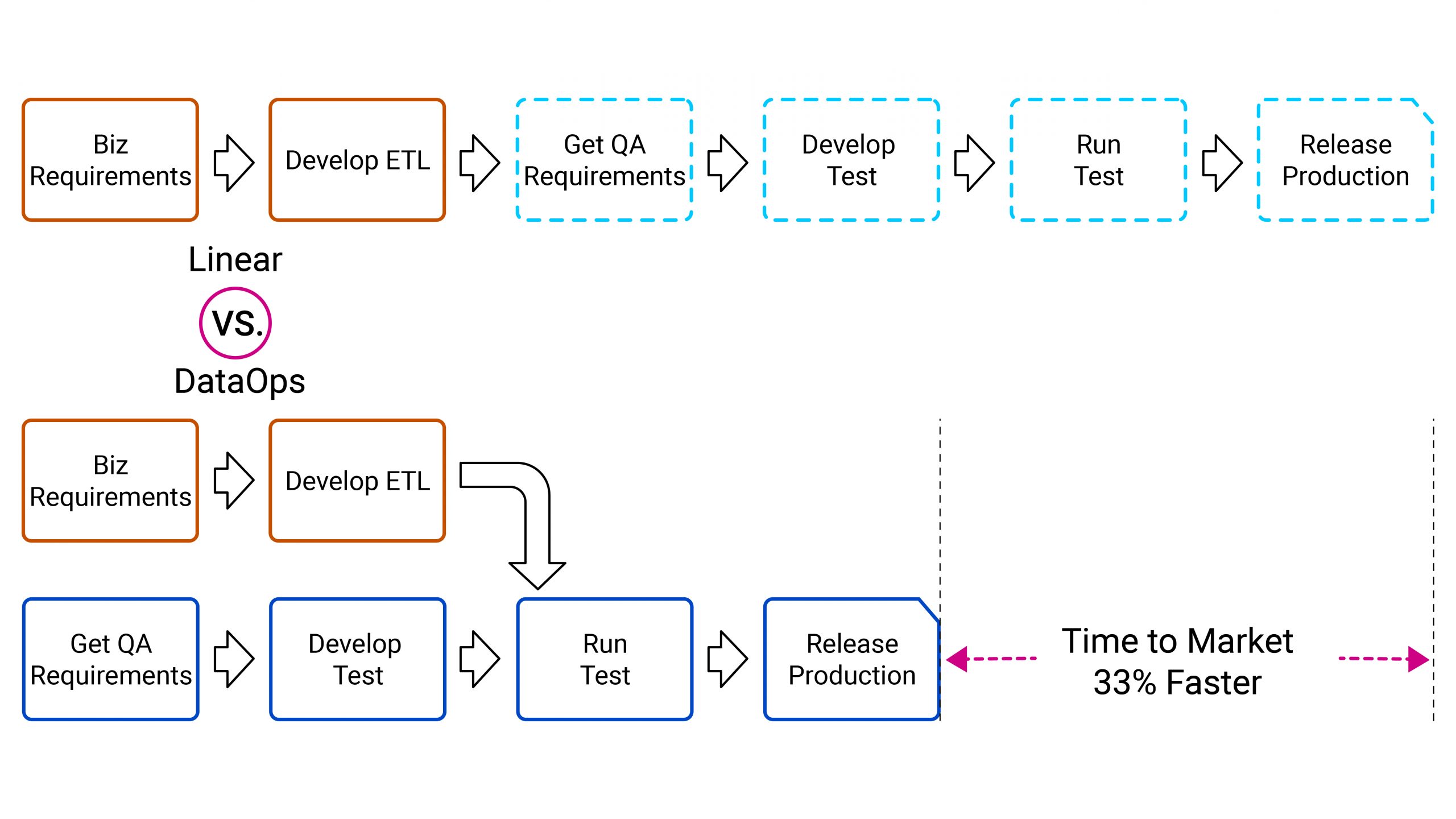

While data transformation rules are provided to developers, the business usually does not share the testing requirements. Developers and testers work sequentially: only after development is complete do testers work on testing requirements and test creation, and only then does the focus shift to testing. By then it is late in the process.

Instead of following sequential steps, the team can design and develop the tests in parallel with the development of the data pipeline. With this non-linear timeline, time-to-market is 33% faster.

3. Link QA Requirements with Physical Tests

While the iCEDQ platform is a repository for holding physical tests, it also has capabilities to link iCEDQ tests with requirements and test management systems like HPALM, JIRA, etc. This allows organizations to enforce a minimum number of tests required for approval. For example, a complex ETL process should have at least seven tests, medium complexity should have five and simple processes should have two.

When the tests are executed, the results can be reported automatically to the requirement and test case management system.
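The minimum-test policy described above can be sketched as a simple approval check. This is an illustration only, not iCEDQ functionality: the `EtlProcess` class, the `approval_gaps` function, and the process and test names are hypothetical, and only the thresholds (seven, five, two) come from the text.

```python
from dataclasses import dataclass

# Minimum number of linked tests per complexity tier, taken from the text above.
MIN_TESTS = {"complex": 7, "medium": 5, "simple": 2}


@dataclass
class EtlProcess:
    name: str
    complexity: str          # "complex", "medium" or "simple"
    linked_test_ids: list    # IDs of tests linked to this process's requirement


def approval_gaps(processes):
    """Return {process name: number of missing tests} for under-tested processes."""
    gaps = {}
    for proc in processes:
        required = MIN_TESTS[proc.complexity]
        missing = required - len(proc.linked_test_ids)
        if missing > 0:
            gaps[proc.name] = missing
    return gaps


# Hypothetical processes: one complex process with too few tests, one simple
# process that meets its minimum.
processes = [
    EtlProcess("load_customer_dim", "complex", ["T1", "T2", "T3"]),
    EtlProcess("load_country_ref", "simple", ["T4", "T5"]),
]
print(approval_gaps(processes))  # {'load_customer_dim': 4}
```

A check like this could run before a release is approved, with an empty result meaning every process meets its minimum.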

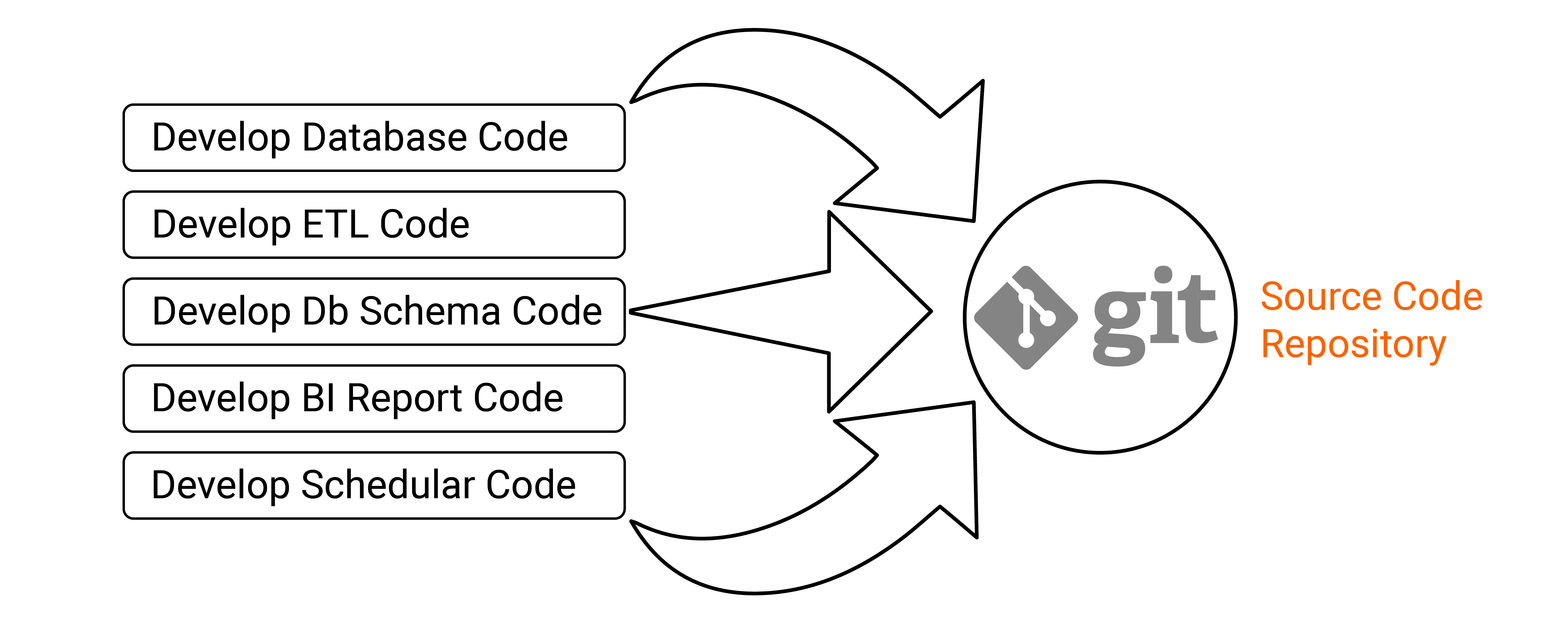

4. Treat Everything as Code & Use Code Repository

DataOps requires a high level of automation of development and engineering. In any data project, the following items are produced:

- ETL code: Data transformation and loading processes.

- Database Objects: Procedures, functions, tables, indexes, etc.

- Configuration data: It is populated in the database to run the data processes. It should be in the form of SQL scripts.

- Initialization data: It is required to bring the system to the current state. It should be in the form of SQL scripts.

- Reference data: The data required for reference, for example customer types, product types, etc. It should be in the form of SQL scripts.

- Shell Scripts

- Scheduling

- Reports and Dashboard

- Testing and data monitoring rules.

Once everything (ETL, Database procedures, schemas, schedules, and reports, etc.) is treated as code, then the question is, where is the code stored for deployment? There are industry-standard solutions like Git repositories, where the code can be committed and deployed as required. There could be some exceptions for data. For example, the initialization data might be huge, and then it can be stored in specialized database repositories that can be used as a source for deployment.

Store the code in a repository. The main requirement is to ensure the code is accessible to some automation tool. The code in data-centric projects is a combination of ETL code, BI report code, scheduler/orchestration code, database procedures, database schema (DDL) and some DML. Both ETL and reports code must be captured and stored in some repository.

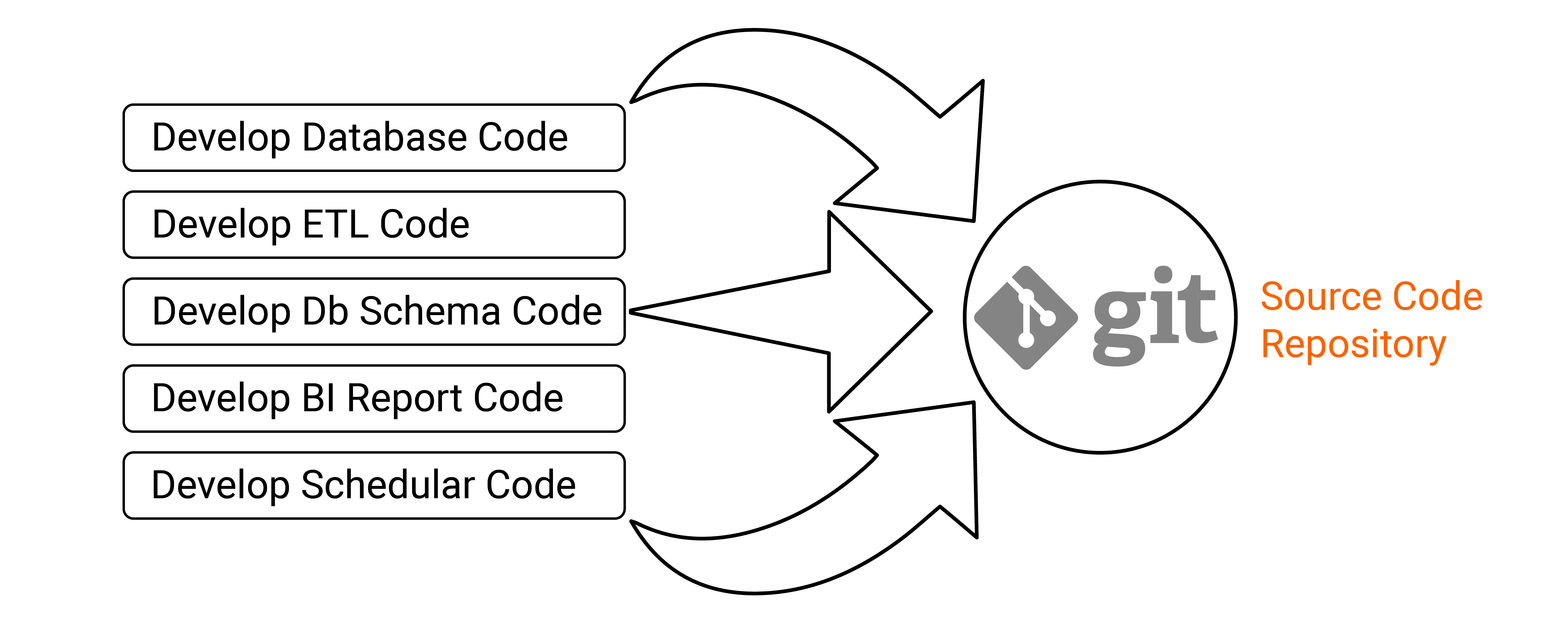

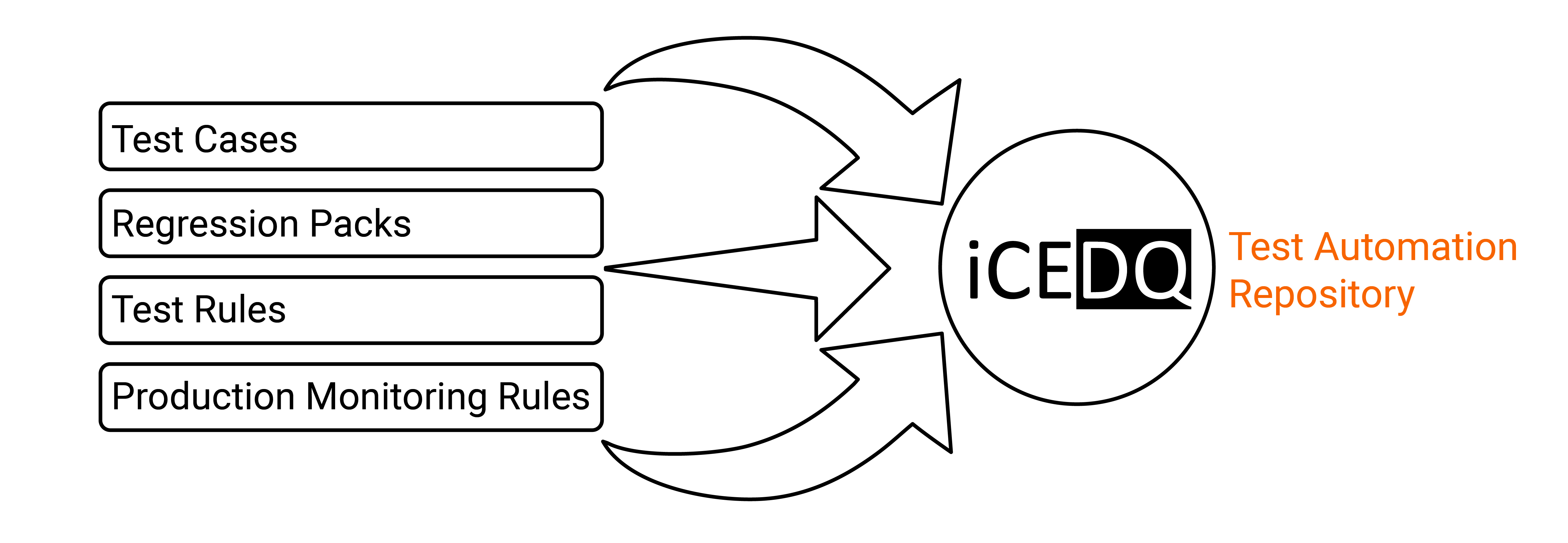

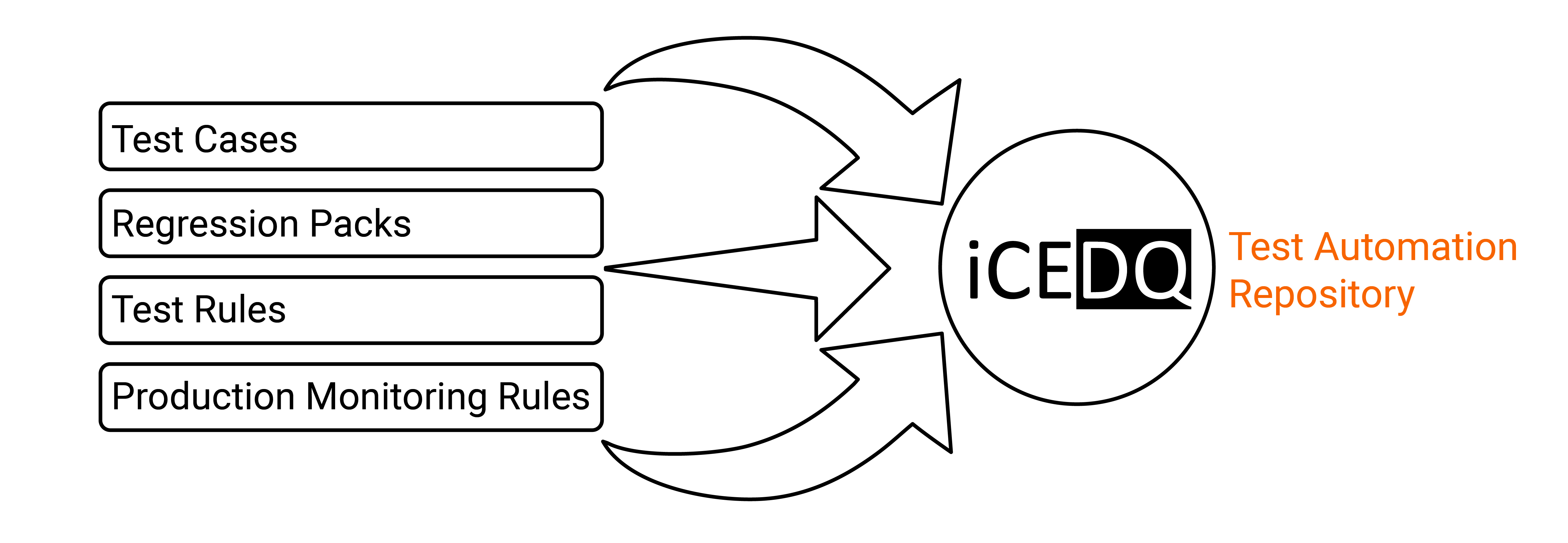

5. Create Centralized QA Test Repository

The test automation repository should consist of test cases, regression packs, test automation scripts and production data monitoring rules. These tests can be recalled on demand by a CICD script.

A centralized repository ensures that all testing and monitoring rules are stored and accessible in the future. The testing approach for data processes (ETL) and reports is entirely different from the testing approach for software applications. The ETL process is executed first, and then the resulting data is compared with the original input data to certify the ETL process. This is because the quality of ETL is determined by comparing expected vs. actual data. The actual data is the data added or updated by the ETL process, and the expected data is the input data plus the data transformation rule(s).

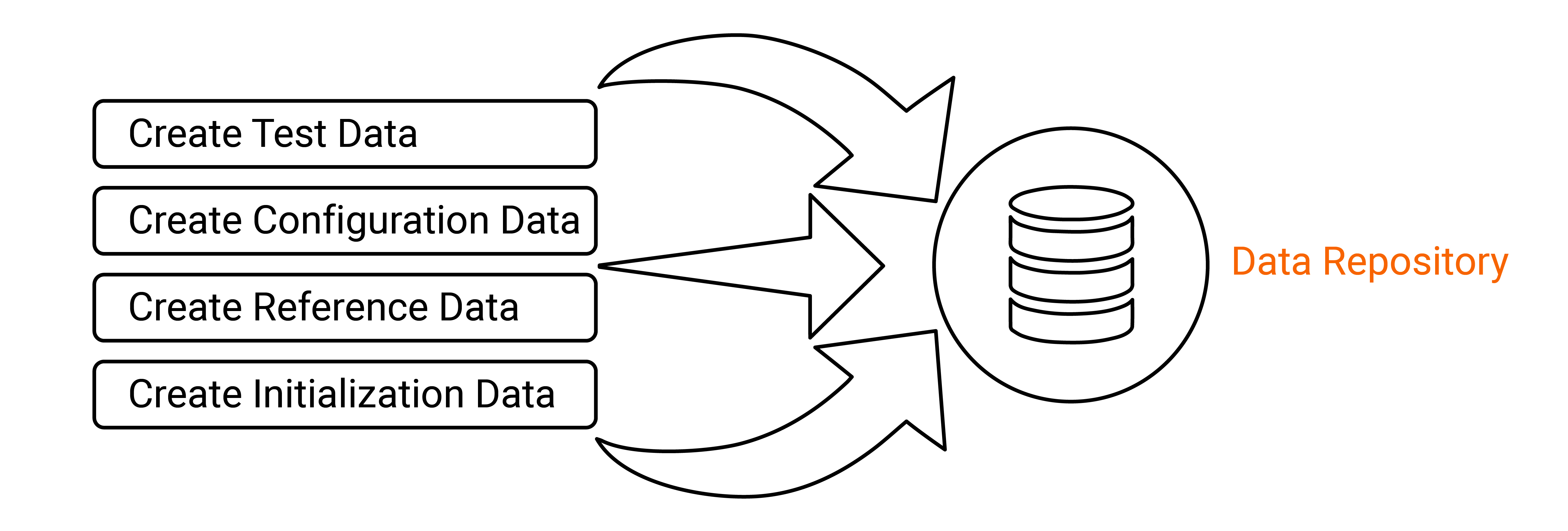
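The expected vs. actual comparison used to certify an ETL process can be sketched in a few lines. This is a minimal illustration under stated assumptions: the function names, the sample rows, and the upper-casing transformation rule are all hypothetical, and a real test tool would run the comparison against databases rather than in-memory dictionaries.

```python
def expected_rows(source_rows, transform):
    """Expected target data = input data plus the transformation rule."""
    return {row["id"]: transform(row) for row in source_rows}


def reconcile(expected, actual):
    """Compare expected vs. actual keyed rows and list every difference."""
    issues = []
    for key, exp in expected.items():
        if key not in actual:
            issues.append((key, "missing in target"))
        elif actual[key] != exp:
            issues.append((key, "value mismatch"))
    for key in actual.keys() - expected.keys():
        issues.append((key, "unexpected in target"))
    return sorted(issues)


# Hypothetical transformation rule: full_name = upper(first + ' ' + last)
def rule(row):
    return {"full_name": f"{row['first']} {row['last']}".upper()}


source = [
    {"id": 1, "first": "Ada", "last": "Lovelace"},
    {"id": 2, "first": "Alan", "last": "Turing"},
]
# Rows as loaded by a hypothetical ETL process; row 2 was loaded incorrectly.
target = {1: {"full_name": "ADA LOVELACE"}, 2: {"full_name": "Alan Turing"}}

print(reconcile(expected_rows(source, rule), target))
# [(2, 'value mismatch')]
```

An empty list certifies the load; any entry pinpoints the key that failed and why.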

6. Create Data Repository/Golden Copy

Whenever new data processes are added or updated, some data must usually be prepopulated into the database, such as reference data, test data, or configuration data. Configuration data could include dates required for incremental loads.

Configuration data, reference data, and test data are often not managed. A data project requires test data; however, test data is usually neither created in advance nor linked to the test cases. Reference data is required to initialize the database. For example, default values for customer types must be created in advance because they have no data source. If the reference data is missing, none of the ETL processes will work. Configuration table data must also be prepopulated. Some of it is used for incremental or delta processing and some for populating metadata about the processes.

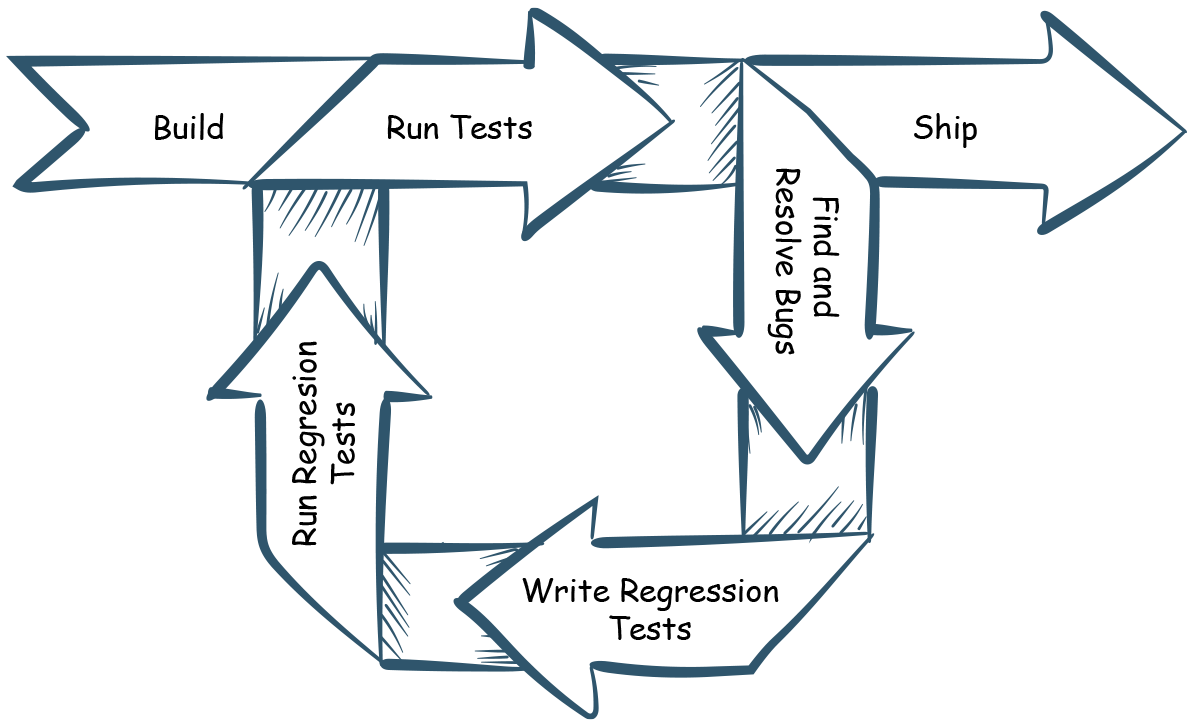
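Prepopulating reference and configuration data from a versioned SQL script might look like the sketch below. It uses Python's built-in `sqlite3` module as a stand-in for the real target database, and the table names, column names, and seed values are hypothetical. The key design choice is that the script is idempotent, so a deployment job can run it on every release.

```python
import sqlite3

# Hypothetical seed script; in practice this would live in the code repository.
SEED_SQL = """
CREATE TABLE IF NOT EXISTS customer_type (
    code TEXT PRIMARY KEY,
    description TEXT NOT NULL
);
CREATE TABLE IF NOT EXISTS etl_config (
    process_name TEXT PRIMARY KEY,
    last_loaded_date TEXT NOT NULL
);
INSERT OR IGNORE INTO customer_type VALUES ('RET', 'Retail');
INSERT OR IGNORE INTO customer_type VALUES ('COR', 'Corporate');
INSERT OR IGNORE INTO etl_config VALUES ('load_orders', '1900-01-01');
"""


def seed(conn):
    """Apply the seed script; safe to re-run because the inserts are idempotent."""
    conn.executescript(SEED_SQL)
    conn.commit()


conn = sqlite3.connect(":memory:")
seed(conn)
seed(conn)  # a second run must not duplicate rows
print(conn.execute("SELECT COUNT(*) FROM customer_type").fetchone()[0])  # 2
```

The `etl_config` row illustrates configuration data for incremental loads: the stored date tells the first delta run where to start.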

7. Create Regression Packs for Testing

After the system goes live, if any data issues are found, the development team must go back and fix the code. Changes in business requirements can also force code changes, and even adding new features will impact the existing system. These frequent changes make complete regression testing a significant challenge, since previous test cases must also be rerun to test the ETL flow.

Creating a data test regression pack solves this problem. You essentially create a test pack containing the new tests and import some of the old tests to ensure that the new changes do not break anything. If the team has not used a test automation tool like iCEDQ that stores the rules in a repository, no earlier tests will exist to reuse. With such a repository, the tests can be recalled at any time in the future whenever changes are made to the code, data, or reports.

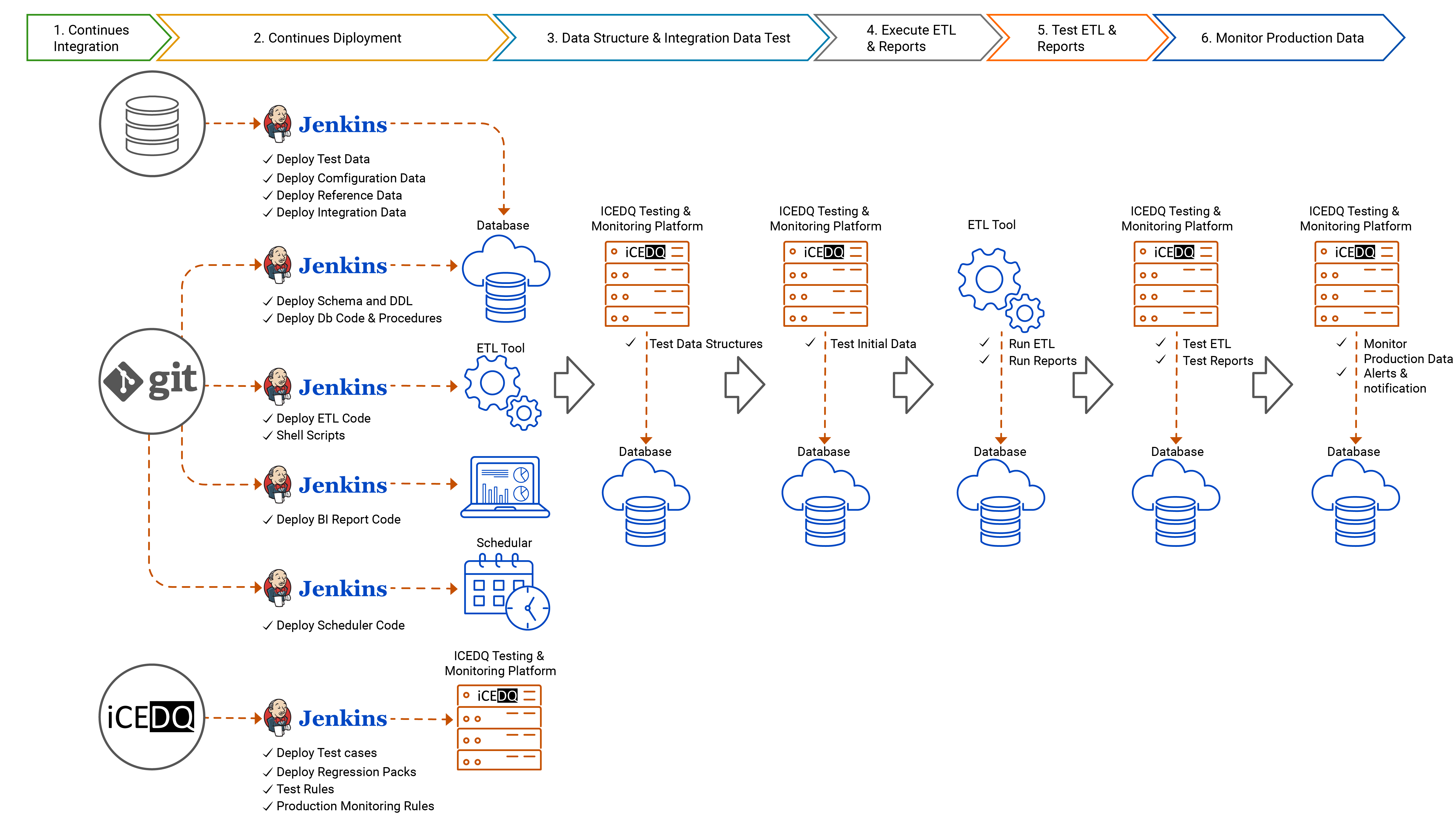
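One way to assemble a regression pack from a test repository is sketched below. The selection policy is an assumption made for illustration (all tests from the current release, plus older tests that cover any changed object), and the `DataTest` class, test IDs, and object names are hypothetical rather than part of any tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataTest:
    test_id: str
    targets: frozenset   # tables or reports the test covers
    release: str         # release in which the test was created


def build_regression_pack(repository, changed_objects, current_release):
    """Select new tests plus older tests that touch any changed object."""
    changed = set(changed_objects)
    pack = []
    for test in repository:
        is_new = test.release == current_release
        is_impacted = bool(test.targets & changed)
        if is_new or is_impacted:
            pack.append(test.test_id)
    return pack


# Hypothetical repository contents accumulated over three releases.
repository = [
    DataTest("T001", frozenset({"customer_dim"}), "R1"),
    DataTest("T002", frozenset({"orders_fact"}), "R1"),
    DataTest("T003", frozenset({"orders_fact", "sales_report"}), "R2"),
    DataTest("T004", frozenset({"product_dim"}), "R3"),
]

print(build_regression_pack(repository, ["orders_fact"], "R3"))
# ['T002', 'T003', 'T004']
```

Here a change to `orders_fact` in release R3 pulls in the two older tests that cover it, while the unrelated `T001` is left out.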

8. Build CICD pipeline for Automated Build and Deployment

Since most ETL and report developers use GUI-based tools to create their processes or reports, the code is not visible. The ETL tool stores the code directly in its own repository. This creates a false narrative that there is no code and hence no need to manage, version, or integrate it. However, the majority of ETL tools now provide APIs to import and deploy the system into different environments, a capability that is often ignored. Once the code, test, and data repositories are built, use tools like Jenkins to build and deploy the code.

- Continuous Integration – As the previous sections make clear, all code must be stored in a repository and be available for DevOps automation. Once it is, managing code branches and versions becomes easy. Based on the release plan, code can be selected and integrated with the help of CICD tools like Jenkins.

- Continuous Deployment – The integrated code is pulled by Jenkins and deployed using the API or the command-line import and export utilities of each tool. Depending on the code type, the code is pushed to a database, ETL, or reporting platform. CICD tools will also deploy initialization data into the database. This creates the necessary QA or production environment, ready for further execution.

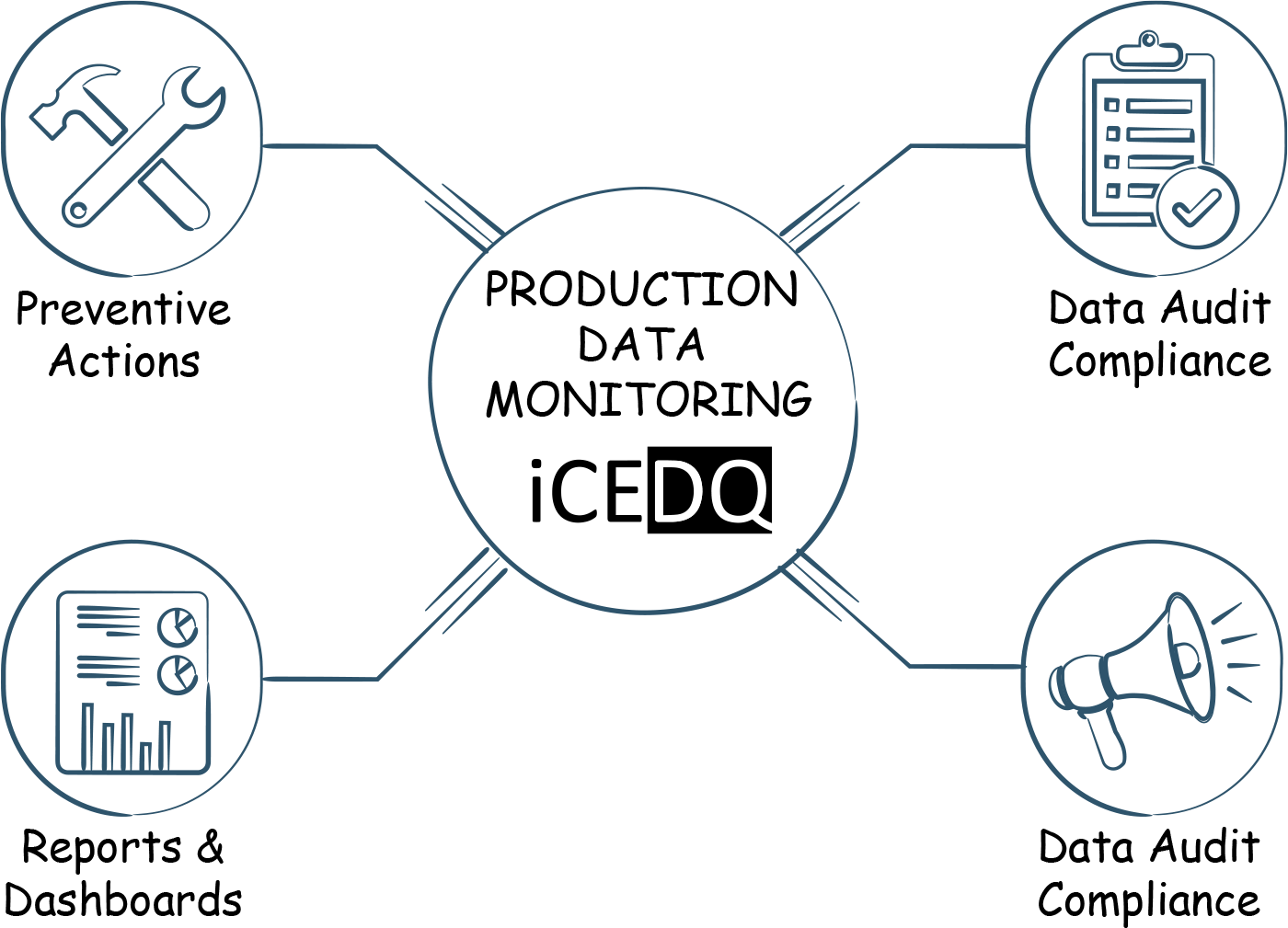
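The routing step in continuous deployment, where each piece of code is pushed to a platform according to its type, can be sketched as a small planning script that a CICD job might call. This is not a Jenkins API: the folder layout, the `TARGETS` mapping, and the file names are assumptions chosen for illustration.

```python
from pathlib import PurePosixPath

# Hypothetical mapping from top-level repository folder to deployment target.
TARGETS = {
    "db": "database",
    "etl": "etl_platform",
    "reports": "reporting_platform",
    "seed": "database",      # initialization data is deployed to the database
}


def plan_deployment(changed_files):
    """Group changed files by the platform they must be deployed to."""
    plan = {}
    for path in changed_files:
        top_folder = PurePosixPath(path).parts[0]
        target = TARGETS.get(top_folder)
        if target is None:
            raise ValueError(f"no deployment target for {path}")
        plan.setdefault(target, []).append(path)
    return plan


# Hypothetical set of files changed in a release.
changed = [
    "db/tables/customer_dim.sql",
    "etl/load_customer_dim.xml",
    "seed/customer_type.sql",
    "reports/sales_dashboard.json",
]
for target, files in plan_deployment(changed).items():
    print(target, files)
```

Raising an error for an unmapped folder is a deliberate choice here: a file with no known target fails the build rather than being silently skipped.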

9. Implement White Box Monitoring for Data Quality in Production

Usually, once data testing is done, the tests are never used again. This changes with DataOps because the rules are reused in production for data monitoring. This is a direct result of extending the development team’s effort and thought process into production. Before DataOps, operations teams only monitored the ETL jobs for success or failure at the scheduler level, not for data issues. Scheduler-based failure detection only provides Black Box Monitoring.

Data quality governance should not be an afterthought. A special requirement of DataOps is that developers integrate hooks into their data processes to monitor data quality in production. When the system goes live, the operations team receives many of the iCEDQ rules as a jump start for monitoring and certifying production data. Of course, the DataOps team must be selective when choosing the relevant audit rules from the pool of available test cases.

These audit rules provided by the development team will cover many of the White Box Monitoring cases, but not all. The operations team, together with business users and data stewards, still needs to establish complete data quality governance in production.

Integrate Data Audit Rules in operational Dataflows: DataOps requires automated data quality monitoring as an S.O.P. (Standard Operating Procedure). If there are data monitoring rules for data quality in production, it does not make sense to run them manually. The Data Quality Rules in iCEDQ can be integrated as part of the scheduled job orchestration.

- Blocker Rules: Some iCEDQ data quality rules will be embedded in the critical path of the process. If the audit process fails, then the production jobs stop.

- Critical Rules: These iCEDQ rules also run in line with the ETL jobs but only raise alerts, and the process does not stop.

- Warning Rules: These types of iCEDQ rules run parallel to the ETL jobs and provide alerts for the operations and data stewards to check the data.

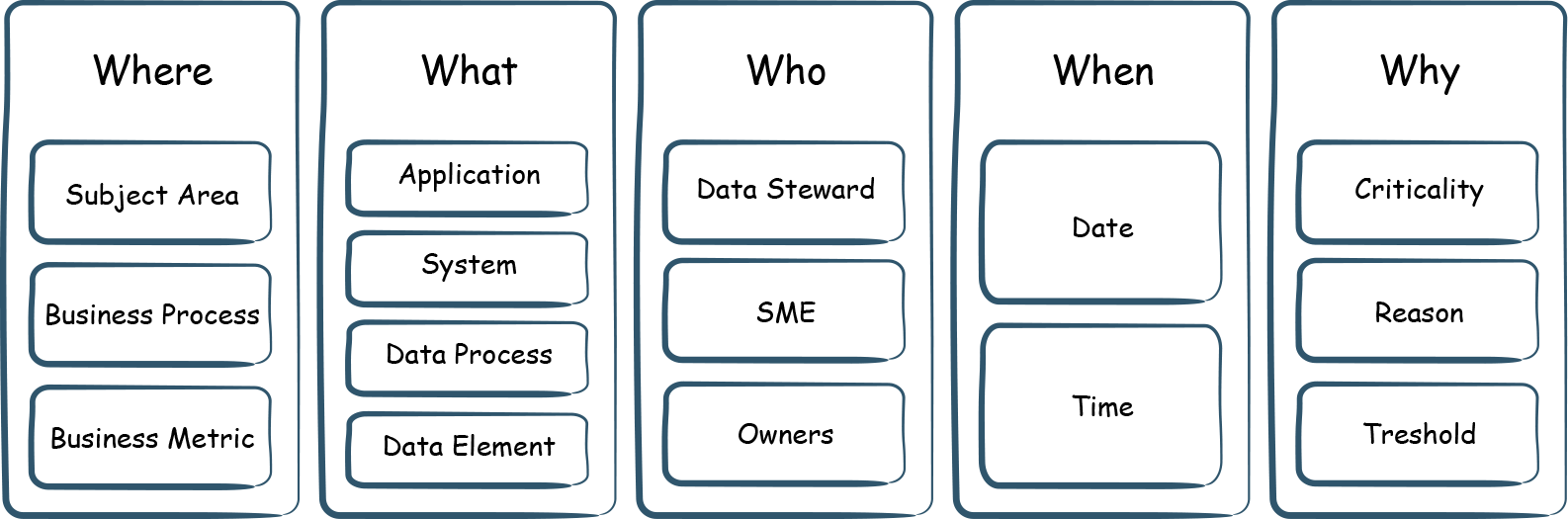
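The difference between rule severities can be sketched as follows. This is a simplified illustration, not the iCEDQ rule engine: the `Severity` enum, the `run_audit` function, and the sample rules are hypothetical, and warning rules are run in sequence here for brevity, whereas in practice they would run in parallel with the ETL jobs.

```python
from enum import Enum


class Severity(Enum):
    BLOCKER = "blocker"
    CRITICAL = "critical"
    WARNING = "warning"


class PipelineStopped(Exception):
    """Raised when a blocker rule fails and the job must not continue."""


def run_audit(rules, data, alerts):
    """Run audit rules; stop on a failed blocker, only alert on the others."""
    for name, severity, check in rules:
        if check(data):
            continue
        alerts.append(f"{severity.value}: {name} failed")
        if severity is Severity.BLOCKER:
            raise PipelineStopped(name)


# Hypothetical rules over a list of order rows.
rules = [
    ("no_null_order_id", Severity.BLOCKER,
     lambda rows: all(r["id"] is not None for r in rows)),
    ("amount_non_negative", Severity.CRITICAL,
     lambda rows: all(r["amount"] >= 0 for r in rows)),
    ("row_count_above_zero", Severity.WARNING,
     lambda rows: len(rows) > 0),
]

alerts = []
run_audit(rules, [{"id": 1, "amount": -5.0}], alerts)
print(alerts)  # ['critical: amount_non_negative failed']
```

In this run the negative amount raises an alert but the job continues; a row with a missing `id` would instead raise `PipelineStopped` and halt the downstream steps.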

10. Establish Data Quality Governance

With the DataOps approach, you get a big jump start with the data quality program, because the development and operations teams are already integrated and work together.

The operations team and business users then need to extend the iCEDQ platform’s capabilities for Data Quality Governance in production.

Create a Taxonomy of Business Process: Any enterprise creates a large amount of data belonging to different business processes and functions. These business processes rely on underlying data. This relationship should be documented. The next step is to identify the business-critical functions and compliance regulations and the underlying data elements required for that function. The quality of underlying data will define the success or failure of the data quality program.

Create Data Quality Rules in Production & Tag Business Processes: There are many factors that ultimately impact the quality of a data element.

- Data sources

- Changes in business rules

- The data process and the transformation of the data

- The timing or the order of execution

All the above factors are dynamic and not under the control of the operations team. The team needs to continually observe the data and changes in the business, and create new audit rules. iCEDQ provides a business rules approach to add data quality rules dynamically. It supports DQ rules based on compliance, validation, or reconciliation of data.

11. Establish Data Quality Issue Resolution Workflows

Once the business process and data elements are identified, a RACI matrix of data stewards and business processes is required. When an issue is identified with a data element, alerts and notifications should be sent to the appropriate person. iCEDQ should provide the data exception report and pinpoint the data problem to specific records and attributes.

- R – Who is responsible?

- A – Who is accountable?

- C – Who should be consulted?

- I – Who should be informed?

For data quality issue management, iCEDQ integrates with workflow tools like JIRA, ServiceNow, etc. All the events happening in iCEDQ are propagated via the Webhook Plugins.
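Routing a failed rule to the right people through a RACI matrix can be sketched as a simple lookup. Everything in this example is hypothetical, including the data element, the role names, and the shape of the notification; it does not represent the payload of any iCEDQ webhook or ticketing integration.

```python
# Hypothetical RACI matrix keyed by data element; names are illustrative only.
RACI = {
    "customer.email": {
        "responsible": "steward_crm",
        "accountable": "head_of_sales_ops",
        "consulted": ["etl_dev_team"],
        "informed": ["compliance_office"],
    },
}


def route_issue(data_element, rule_name, failed_records):
    """Build a notification for a failed rule using the RACI matrix."""
    entry = RACI.get(data_element)
    if entry is None:
        raise KeyError(f"no RACI entry for {data_element}")
    return {
        "assign_to": entry["responsible"],
        "escalate_to": entry["accountable"],
        "cc": entry["consulted"] + entry["informed"],
        "summary": f"{rule_name} failed for {len(failed_records)} "
                   f"record(s) in {data_element}",
        "records": failed_records,
    }


ticket = route_issue("customer.email", "email_format_check", [101, 207])
print(ticket["assign_to"], "-", ticket["summary"])
```

Carrying the failing record identifiers in the notification reflects the requirement above that the exception report pinpoint specific records.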

12. Establish Data Quality Dashboards

While operational needs are satisfied by the workflow tools, management needs insight into the status of data quality, trends, and data quality metrics such as Six Sigma. These Data Quality Dashboards can be built in iCEDQ or with other reporting tools based on two types of data points:

- Static Metadata: Subject area, data element, data steward, Business process, compliance regulation, data quality rule, etc.

- Data Quality Execution Results: Date & time of execution, Records processed, the magnitude of data error, etc.
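As an illustration of how execution results could feed a Six Sigma style metric, the sketch below computes defects per million opportunities (DPMO) and the corresponding sigma level. It assumes each processed record counts as one opportunity for a defect and applies the conventional 1.5 sigma shift; the function names and sample figures are hypothetical.

```python
from statistics import NormalDist


def dpmo(records_processed, records_in_error):
    """Defects per million opportunities, treating each record as one opportunity."""
    if records_processed <= 0:
        raise ValueError("records_processed must be positive")
    return records_in_error / records_processed * 1_000_000


def sigma_level(dpmo_value):
    """Short-term sigma level using the conventional 1.5 sigma shift."""
    if not 0 < dpmo_value < 1_000_000:
        raise ValueError("dpmo must be strictly between 0 and 1,000,000")
    yield_fraction = 1 - dpmo_value / 1_000_000
    return NormalDist().inv_cdf(yield_fraction) + 1.5


# Hypothetical execution result: 460 records in error out of 2,000,000 processed.
d = dpmo(records_processed=2_000_000, records_in_error=460)
print(round(d), round(sigma_level(d), 2))
```

Tracking this value per data quality rule and per execution date gives a trend line that a dashboard can plot alongside the static metadata.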

Conclusion

One of the direct impacts of DataOps is the improvement of data quality for the data pipeline. There are three core reasons for this:

1. Cultural Changes

2. Automation of Testing

- DataOps results in test automation, which can improve productivity by 70% over manual testing.

- Now that the tests are automated, the test coverage can improve by more than 200%. Some tests are time-consuming if done manually; however, with DataOps automation, there are no such limitations. There can now be an increase in both the number of tests and the complexity of the criteria that can be run.

- The cost of production monitoring and refactoring of code is reduced as more defects are captured early in the life cycle of the data pipeline.

- Test and monitoring automation also enables regression testing. The testing and monitoring rules are stored in the system and can be recalled as needed during the regression tests.

3. Production Data Quality Governance

- Tests created during development and QA in iCEDQ are reused in the Production environment to monitor the data.

- The automation of monitoring also removes the limits on the volume of data that can be scanned. Organizations can move from sampling data to big data without any issues. With its Big Data edition, the iCEDQ platform can monitor the production data without data volume constraints.

- The iCEDQ rules can be embedded in the ETL pipeline or run periodically with its built-in scheduler.

- iCEDQ notifies the workflow or ticketing systems whenever there is a data issue.

DataOps is all about reducing data organization silos and automating the data engineering pipeline. CDOs, business users, and data stewards are involved early in the data development life cycle. It forces an organization to automate all its processes, including testing. The data quality tasks are now implemented early in the project life cycle. This provides enormous benefits to the data development team as well as to the operations and business teams that deal with data issues in production environments:

- Faster Time-to-Market

- Improves Data Quality

- Lowers Cost Per Defect

Gartner has recognized Data Testing and Monitoring as an integral part of DataOps and named iCEDQ in their DataOps Market Guide.