Download Resources

- All

- Agile Testing

- BI Testing

- Data Integration

- Data Management

- Data Migration Testing

- Data Quality

- Data Testing

- Data Warehouse

- DataOps

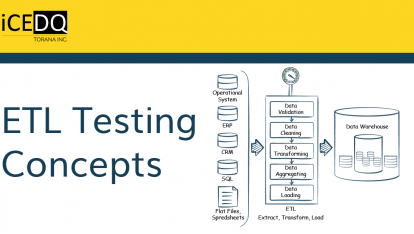

- ETL Process

- ETL Testing

- Quality Assurance

Introductions

What is iceDQ?

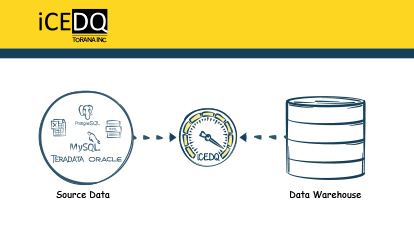

iceDQ is a DataOps testing and monitoring platform designed to identify data issues in or across any data source using our in-memory auditing rules engine.

What is the iceDQ full form?

iceDQ stands for Integrity Check Engine for Data Quality.

Where is iceDQ Used?

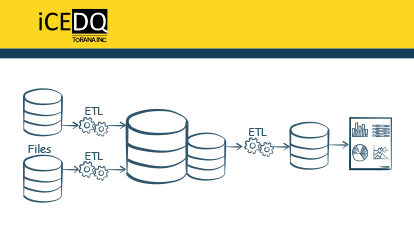

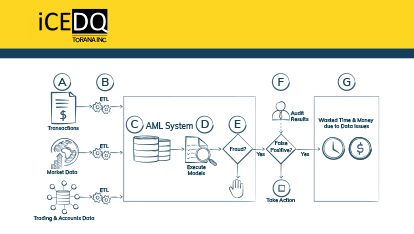

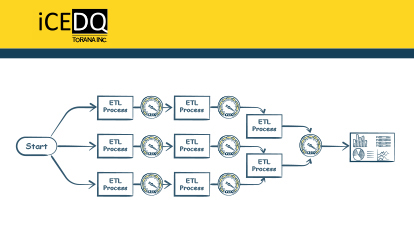

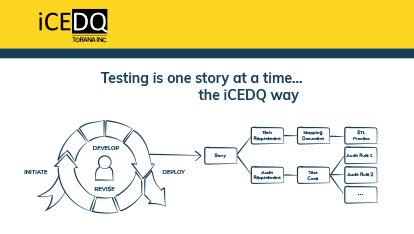

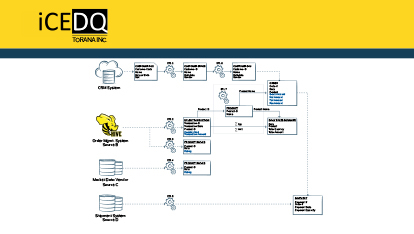

Organizations use iceDQ in the development phase for testing ETL, Data Warehouse, Data Migrations, and BI Reports. It is also used in production environments to proactively monitor data issues.

What is DataOps?

DataOps is the application of DevOps principles, such as CI/CD and Agile, to data-centric projects.

What are the editions of iceDQ?

Currently, iceDQ has three editions: Standard Edition, High Throughput Edition, and Big Data Edition.

When was iceDQ launched?

The first version of iceDQ was made available in 2008. Since then, we have had multiple releases with newer functionalities and have acquired many enterprise customers.

As of 3/1/2020, the current GA version is 16.x.

Who are your customers?

We are proud to count some of the largest Fortune 500 companies among our customers. Organizations from different verticals such as Finance, Healthcare, Retail, Manufacturing, Insurance, and Travel use iceDQ in production and non-production environments.

What are the use cases for iceDQ?

Below are some of the use cases of iceDQ.

- Big Data Testing

- Data Migration Testing

- ETL Testing/Data Warehouse Testing

- BI Report Testing

- Production Monitoring

- Data Compliance

How does it scale for big data?

For big data, you can use iceDQ’s Big Data Edition, where every Rule or Regression Pack is evaluated on an Apache Spark cluster. Users can scale performance by scaling the Spark cluster.

How to schedule a demo for iceDQ?

You can schedule a demo by registering here.

What is the learning and implementation curve for iceDQ?

It is very short. After a one- or two-day training program, it takes users two weeks to completely implement iceDQ in their environment.

Do you support Cloudera for Big Data Edition?

Yes. We currently support Cloudera and Hortonworks, and we will soon release support for AWS EMR. We plan to support Databricks too.

Unique Selling Point

What makes iceDQ different?

The key differentiator is our in-memory rules engine(s). iceDQ validates data in the server memory allowing users to test a high volume of data efficiently. iceDQ also offers an engine based on Apache Spark, which enables users to scale testing of billions of rows on their Spark cluster.

Customer support is also excellent. If you don’t believe it, then sign up for a trial.

What is the difference between iceDQ vs. other ETL Testing Tools?

While there are many differences between iceDQ and other ETL Testing Tools, given below are a few that stand out the most.

- iceDQ evaluates data in memory; it does not load it into any database.

- It supports four different types of rules: Recon, Validation, Checksum, and Script.

- Users can use SQL + Apache Groovy to create advanced transformation checks.

- Users can write Script rules using Apache Groovy or Java.

- Governance and compliance users can use it to govern or monitor production systems.

- It is a server-based platform and does not require any client installations

- An organization can create custom dashboards and reports using its enterprise reporting tool

You can also read our article “iceDQ Platform vs Data Quality Tools.”
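To make the rule types above more concrete, here is a minimal Python sketch of what in-memory Recon, Validation, and Checksum checks could look like. It is an illustration only: the function names and the exact semantics assigned to each rule type are assumptions, not iceDQ's actual implementation or rule syntax (which, per the list above, is based on SQL and Apache Groovy).

```python
def recon_rule(source_rows, target_rows, key):
    """Recon (assumed meaning): reconcile two data sets row by row on a key."""
    src = {row[key]: row for row in source_rows}
    tgt = {row[key]: row for row in target_rows}
    return {
        "missing_in_target": sorted(src.keys() - tgt.keys()),
        "missing_in_source": sorted(tgt.keys() - src.keys()),
        "mismatched": sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k]),
    }


def validation_rule(rows, predicate):
    """Validation (assumed meaning): return the rows that violate a condition."""
    return [row for row in rows if not predicate(row)]


def checksum_rule(source_rows, target_rows, column):
    """Checksum (assumed meaning): compare an aggregate, here a column sum."""
    return sum(r[column] for r in source_rows) == sum(r[column] for r in target_rows)


source = [{"id": 1, "amt": 10}, {"id": 2, "amt": 20}, {"id": 3, "amt": 30}]
target = [{"id": 1, "amt": 10}, {"id": 2, "amt": 25}, {"id": 4, "amt": 5}]

print(recon_rule(source, target, key="id"))
print(validation_rule(target, lambda row: row["amt"] > 0))
print(checksum_rule(source, target, column="amt"))
```

Everything happens on plain Python lists and dictionaries, so no staging database is involved, which is the point the first bullet makes.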

How does the script rule differ from other tools?

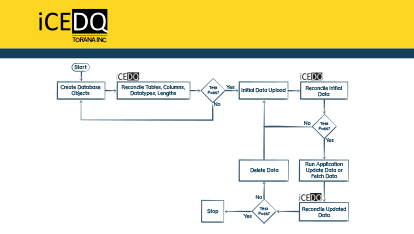

The Script Rule enables a user to automate the testing end to end. Users can write any custom Apache Groovy script or a Java class and execute it from iceDQ. Below are some of the real-world use cases of Script Rule:

- Create tables and insert expected data for testing.

- Read a dynamic parameter from a database and pass it to a Rule.

- Take a backup of the table and compare it with the actual table post ETL.
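The last use case above (back up a table, run the ETL, then compare) can be sketched as follows. This is a Python illustration using the standard `sqlite3` module, not an actual iceDQ Script Rule, which would be written in Apache Groovy or Java. The table names, the helper functions, and the simulated ETL step are all hypothetical.

```python
import sqlite3


def backup_table(conn, table, backup):
    """Copy a table into a backup table. Table names must come from trusted input."""
    conn.execute(f"DROP TABLE IF EXISTS {backup}")
    conn.execute(f"CREATE TABLE {backup} AS SELECT * FROM {table}")


def rows_changed(conn, table, backup):
    """Return (rows only in the current table, rows only in the backup)."""
    added = conn.execute(
        f"SELECT * FROM {table} EXCEPT SELECT * FROM {backup}"
    ).fetchall()
    removed = conn.execute(
        f"SELECT * FROM {backup} EXCEPT SELECT * FROM {table}"
    ).fetchall()
    return sorted(added), sorted(removed)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER, name TEXT)")
conn.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Ann"), (2, "Bob")])

backup_table(conn, "customers", "customers_backup")

# Simulated ETL run: one row updated, one row inserted.
conn.execute("UPDATE customers SET name = 'Bobby' WHERE id = 2")
conn.execute("INSERT INTO customers VALUES (3, 'Cara')")

added, removed = rows_changed(conn, "customers", "customers_backup")
print("new or changed rows:", added)
print("rows no longer present:", removed)
```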

Using iceDQ

During which phase of the project can I use iceDQ?

You can use iceDQ in the:

- Development phase for Quality Assurance/ Testing

- Production phase for Data Monitoring/ Compliance

Who should be interested in iceDQ?

The following groups of users should be interested in iceDQ:

- Developers for unit testing

- QA Users for Functional Testing, Non-Functional Testing, Regression Testing

- QA Managers for Release Management, Sign Off

- Data Architects, DW Managers to monitor the progress of the project

- Governance/ Compliance Users for Governing or Monitoring the Production system

What amount of data can be compared using iceDQ?

You can compare millions of rows with iceDQ. Depending on the type of audit rule created, iceDQ can process between 10k and 20k rows per second.
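As a rough back-of-the-envelope illustration of that throughput range, the sketch below estimates how long a single rule would take on 10 million rows. It assumes the rate stays constant for the whole run, which real workloads may not satisfy; the row count is an arbitrary example.

```python
def estimated_seconds(row_count, rows_per_second):
    """Estimated run time, assuming a constant processing rate."""
    return row_count / rows_per_second


rows = 10_000_000
slow = estimated_seconds(rows, 10_000)  # lower bound of the stated range
fast = estimated_seconds(rows, 20_000)  # upper bound of the stated range

print(f"{rows:,} rows: {fast / 60:.1f} to {slow / 60:.1f} minutes")
# prints "10,000,000 rows: 8.3 to 16.7 minutes"
```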

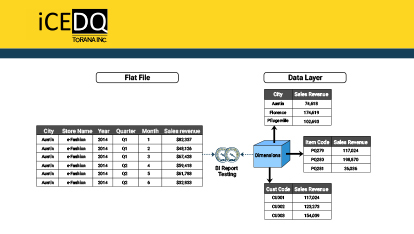

Can iceDQ compare a File with a database?

Yes, it can. iceDQ supports Flat Files, Excel, XML, JSON, Parquet, and other file formats.

Does it use a database to compare?

No, iceDQ does not use a database to compare or validate data. It has an in-memory rules engine purely built in Java and Spark.

Architecture

Does iceDQ run on my desktop or server?

The software runs on a server, where all the data processing happens. Users access it through the web app; no client installation is needed.

Does it use a database to compare?

No, iceDQ does not use a database to compare or validate data. It has an in-memory rules engine purely built in Java.

Does it need a lot of memory?

Not at all. The engine pulls data into memory, in chunks, evaluates it, and keeps on doing that till the complete data set is validated.
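The chunked evaluation described above can be sketched in a few lines of Python. This is only an illustration of the general technique (stream the data, hold one chunk in memory at a time); the function names and the chunk size are assumptions and say nothing about how the iceDQ engine is actually built.

```python
from itertools import islice


def chunks(iterable, size):
    """Yield successive lists of at most `size` items from any iterable."""
    it = iter(iterable)
    while True:
        chunk = list(islice(it, size))
        if not chunk:
            return
        yield chunk


def validate_in_chunks(rows, predicate, chunk_size=1000):
    """Validate a stream of rows while holding only one chunk in memory."""
    checked = 0
    failures = []
    for chunk in chunks(rows, chunk_size):
        failures.extend(row for row in chunk if not predicate(row))
        checked += len(chunk)
    return checked, failures


# A generator stands in for a large source that is never fully materialized.
rows = ({"id": i, "amount": i - 2} for i in range(10))
checked, failures = validate_in_chunks(rows, lambda r: r["amount"] >= 0, chunk_size=3)
print(checked, failures)
```

Only the failing rows are retained, so memory use depends on the chunk size and the number of data issues rather than on the total size of the data set.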

How can I scale iceDQ?

There are multiple ways to scale iceDQ based on the requirement. Different options are available:

- Adding more CPU will allow you to run more Rules in parallel.

- Using our HA Cluster will enable you to install the engine and app on multiple servers. This will make the app highly available and increase the number of CPUs.

- Using High Throughput Edition will allow you to run a Rule on multiple cores, in turn, improving the performance of an individual Rule.

- Big Data Edition will enable the user to scale the performance of an individual Rule based on the size of the cluster.
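The first option above, running more Rules in parallel as CPUs are added, can be pictured with a small Python sketch. Here `max_workers` stands in for the number of available CPUs, and each rule is a hypothetical zero-argument function returning pass or fail. Threads are used purely to illustrate the scheduling idea; this is not how iceDQ itself distributes work.

```python
from concurrent.futures import ThreadPoolExecutor


def run_rules_in_parallel(rules, max_workers):
    """Run independent rules concurrently.

    `rules` maps a rule name to a zero-argument callable that returns
    True (pass) or False (fail).
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(rule) for name, rule in rules.items()}
        return {name: future.result() for name, future in futures.items()}


rules = {
    "row_count_matches": lambda: len([1, 2, 3]) == len(["a", "b", "c"]),
    "no_negative_amounts": lambda: all(x >= 0 for x in [5, 0, -1]),
}
print(run_rules_in_parallel(rules, max_workers=2))
```

Raising `max_workers` lets more rules run at once, but each individual rule still runs on one worker, which is the distinction the High Throughput and Big Data Edition bullets draw.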

Do you expose any iceDQ API?

We expose an API to execute Rules from any external tool for integration. Users can override connections and parameters at runtime through the API, which allows them to reuse the Rules.
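To show the idea of overriding connections and parameters at runtime, here is a Python sketch that builds (but does not send) an HTTP request. Everything specific in it is hypothetical: the URL path, the JSON field names, and the bearer-token header are placeholders invented for illustration, not the documented iceDQ API. Consult the product documentation for the real endpoints.

```python
import json
from urllib.request import Request


def build_rule_execution_request(base_url, rule_id, connections=None,
                                 parameters=None, token=""):
    """Build a POST request for a HYPOTHETICAL rule-execution endpoint."""
    payload = {
        "ruleId": rule_id,
        "connectionOverrides": connections or {},  # hypothetical field name
        "parameterOverrides": parameters or {},    # hypothetical field name
    }
    return Request(
        url=f"{base_url}/rules/{rule_id}/execute",  # hypothetical path
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",     # hypothetical auth scheme
        },
        method="POST",
    )


# The same rule definition is reused against a different environment and date.
req = build_rule_execution_request(
    "https://icedq.example.com/api",
    rule_id=42,
    connections={"source": "ORACLE_QA"},
    parameters={"business_date": "2020-03-01"},
)
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))
```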

Can Rules be migrated across instances of iceDQ?

Yes. Users can export an individual Rule, a batch of Rules, or a complete project into an export file and then import it into any instance of iceDQ.

iceDQ Installation

What is the installation requirement?

Below are the requirements for installing iceDQ.

- Windows or Linux Server

- Java JDK 1.8 or above

- Database Schema (Oracle/ MSSQL/ Postgres)

You can take a look at the pre-installation checklist here.

How much memory should I allocate to the iceDQ server?

We recommend a minimum of 16 GB. You can increase it based on your workload and usage.

How much time does it take to install iceDQ?

It takes 30-45 mins to install and get iceDQ up and running.

How complicated is the iceDQ upgrade?

It is a straightforward, in-place upgrade. We recommend taking a backup of the database repository before the upgrade.

Can I install iceDQ on a cloud?

Yes, you can install iceDQ on any cloud platform. Customers have installed this on AWS, Azure, and GCP.

Can I connect to on-prem data sources from the cloud and vice versa?

Yes, as long as your VPN allows you to do so. We do not put any restrictions on where you can install iceDQ or what you can connect to.

What cloud data sources can I connect using iceDQ?

You can connect to Snowflake, Redshift, S3, and many others. Find the complete list here.

Connectivity

How does iceDQ connect to a data source?

It uses JDBC or a JDBC-ODBC bridge to connect to various data sources. In some cases, we build custom connectors in Java using the data source's SDK.

What databases can iceDQ connect to?

It can connect to any JDBC-compliant database, such as SQL Server, Oracle, or PostgreSQL. You can look at the complete list of database connectors here.

What are the different file formats supported by iceDQ?

Users can read data from flat files (delimited or fixed-width), XML, JSON, and Excel. You can check out the complete list of supported file types here.

Does iceDQ support XML?

Yes, iceDQ allows the user to write SQL against an XML file.

Does iceDQ support JSON?

Yes, iceDQ allows the user to write SQL against a JSON file.

What if the connector I want is not available in iceDQ?

You can raise a feature request on our support portal or reach out to our sales team to get more information.

What BI Reporting Tools does iceDQ support for testing?

Currently, we support SAP BO (Webi) and Tableau. Our roadmap for BI Report connectors is driven by customer requests. Next, we are going to release the connector for MicroStrategy.

What Big Data systems does iceDQ support?

It can connect to Hive, Impala, Cassandra, and many other data sources in your ecosystem. You can find the complete list here.

Can I compare PDF files using iceDQ?

There is no direct support for PDF files, but customers have converted them into XML and used our XML connector to compare the data.

What cloud Data Warehouses can you connect to?

You can connect to Snowflake, Redshift, Azure Synapse, and a few others. You can find the complete list here.

Does iceDQ have access to our data?

No. iceDQ is software that is entirely managed by the customer with the help of our support team. It does not communicate with external systems, and our teams do not have access to your instance of iceDQ.

Integration

We have HPQC and want to see all the results in iceDQ. Can we do that with iceDQ?

Yes, iceDQ comes with an out-of-the-box integration with HP ALM 12.5 or above. You can import the Requirements and Test Cases from HP ALM and associate the audit rules with them. After execution, the results are posted back to HP ALM.

I want to integrate iceDQ with Informatica. Is it possible?

Yes, it can be integrated with any ETL tool on the market through the command line or web services.

Can it also be integrated with Control-M or Autosys?

Definitely, you can achieve that using the command line or web service interface of iceDQ.

What are some of the out-of-the-box integrations?

We have REST APIs and a CLI, which customers use to trigger tests from any external scheduling, ETL, workflow, or scripting tool.

Currently, we have out-of-the-box integrations with HP ALM, JIRA, and Jenkins. Customers can also build their own integrations with tools like ServiceNow, Slack, and Elasticsearch through our Integration Hub module.

Can I call iceDQ API from other tools?

Yes, you can. We have a REST API to trigger Rules and Regression Packs from any external tool.

Will I be able to integrate iceDQ with a CI/CD tool?

Absolutely. Customers have integrated with Jenkins, Bamboo, and other CI/CD tools using our APIs, CLI, or plugins.
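In a CI/CD pipeline, the usual pattern is for a build step to fail when any data test fails. The Python sketch below shows that gating logic only. How the results are obtained (API, CLI, or plugin) is left out, and the `results` dictionary and rule names are hypothetical.

```python
def ci_exit_code(results):
    """Return 0 if every rule passed, 1 otherwise, and list the failures.

    `results` maps a rule name to True (pass) or False (fail).
    """
    failed = sorted(name for name, passed in results.items() if not passed)
    for name in failed:
        print(f"FAILED: {name}")
    return 1 if failed else 0


results = {"orders_recon": True, "customers_not_null": False}
code = ci_exit_code(results)
print("exit code:", code)
# In a real pipeline step, call sys.exit(code) so the CI tool marks the build as failed.
```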

What test case management tools can I integrate with iceDQ?

You can integrate with HP ALM and X-Ray. We are also planning to release support for QTest, TFS, and a few other test case management tools.

Collaboration

Do I receive an alert or notification if a test fails?

Yes, iceDQ sends email alerts on the success or failure of a Rule, depending on how it is configured.

iceDQ Security

Do you support RBAC?

Yes, we do. Customers can create users, groups, and give different types of grants on connections, projects, and other entities.

Can iceDQ integrate with LDAP?

Yes. Customers can support LDAP users as well as local application users at the same time.

How does iceDQ ensure database access security?

iceDQ supports two types of connections: generic connections, which can use service accounts, and user connections, in which each user has to provide their own credentials. User connections offer an added level of security because credentials are not shared among users.

iceDQ Reporting & Dashboard

Does iceDQ have any built-in reports?

Yes, iceDQ provides a few pre-packaged dashboards in its reporting and dashboard module.

Can I create custom reports and dashboards?

Yes, you can. We have a reporting and dashboard module that allows users to generate reports and dashboards using the available templates.

How does iceDQ report test failures?

It sends email alerts on the success and failure of Rules. The email includes execution details as well as the ability to download the data issues in Excel format. Rules can be configured to send email alerts to users, groups, or distribution lists.
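An alert of this kind can be pictured with the following Python sketch, which builds (but does not send) an email containing execution details and the failing rows as an attachment. It is an illustration under assumptions: the subject format, sender address, and file name are invented, and a CSV attachment stands in for the Excel download that iceDQ provides.

```python
import csv
import io
from email.message import EmailMessage


def build_alert(rule_name, passed, failed_rows, recipients,
                sender="alerts@example.com"):
    """Build an alert email; failing rows are attached as CSV (a stand-in for Excel)."""
    status = "PASS" if passed else "FAIL"
    msg = EmailMessage()
    msg["Subject"] = f"[{status}] {rule_name}"
    msg["From"] = sender
    msg["To"] = ", ".join(recipients)
    msg.set_content(
        f"Rule: {rule_name}\nStatus: {status}\nRows with issues: {len(failed_rows)}\n"
    )
    if failed_rows:
        buffer = io.StringIO()
        writer = csv.DictWriter(buffer, fieldnames=list(failed_rows[0].keys()))
        writer.writeheader()
        writer.writerows(failed_rows)
        msg.add_attachment(
            buffer.getvalue().encode("utf-8"),
            maintype="text",
            subtype="csv",
            filename="data_issues.csv",
        )
    return msg


alert = build_alert(
    "orders_recon",
    passed=False,
    failed_rows=[{"order_id": 7, "source_amt": 20, "target_amt": 25}],
    recipients=["qa-team@example.com"],
)
print(alert["Subject"])
# Sending would use smtplib.SMTP(...).send_message(alert) against your mail server.
```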

iceDQ Trial

Do you offer a free iceDQ trial?

Yes, we offer a free 30-day trial with access to the complete iceDQ software and support. First, we schedule a demo of the product and answer any questions you have. This ensures that iceDQ can satisfy your requirements.

Request for 30 Day Free Trial.

Do you provide training during the trial period?

As part of the trial/ POC, we do a 1.5-hour training session at no cost. We also schedule a weekly standing call to help you with any questions.

We also provide access to our support portal.

What do I need to install iceDQ?

Download iceDQ pre-installation checklist here.

What are the features disabled in the iceDQ trial?

We provide the full, most recent GA version of the iceDQ software for the trial. Nothing is disabled.

Where can I install iceDQ?

You can install it on-prem or in your private cloud (AWS/ Azure/ GCP).

If I have any questions or issues during my trial, what is the best way to get an answer?

You can raise a ticket on the iceDQ support portal. We will also assign a customer success manager to you as part of the trial, who will be responsible for coordinating everything.

Support

What support channels do you offer?

We provide email, phone, and WebEx support. In some instances, we offer onsite support too.

Do you provide 24x7 support?

Yes, we provide 24×7 support via email, phone, or WebEx.

How can I open a ticket?

As part of onboarding, we provide access to our support portal. Users can raise tickets there or send an email to support.

Do you have an online knowledgebase?

Yes, we do. Once you get access to our support portal, you will get access to the KB too.

Training

Do you have any iceDQ training programs?

Yes, we provide instructor-led training classes that are either online or in person.

Do you offer a formal certification for iceDQ?

Yes, you will receive a certificate as an “iceDQ Certified Developer” upon the completion of your training program. It is valid for a calendar year, and a refresher course will be required if there are changes in the software.

Do you have an online course?

Yes, we offer an instructor-led online training program. Each class is interactive and offers hands-on exercises. We will soon be launching a self-paced online training program.

Do you provide a group discount for the iceDQ training?

Yes, we do. Please contact us for more details.

Licensing

How does iceDQ's licensing work?

We offer different licensing options for customers to choose from based on requirements.

- Server license (Annual Subscription)

Deploy iceDQ on a Server with no restriction on the number of users.

- Enterprise license (Unlimited deployments)

Customers can deploy unlimited instances of iceDQ products across the organization.

Is there any limit on the number of users in the Server license?

No. An unlimited number of users can log in and use iceDQ.

Do you offer subscription licensing?

Yes, we do offer a subscription license for iceDQ.

Are there any additional licensing costs?

We offer custom connector add-ons for certain types of files and cloud systems, as well as a load balancer add-on. Every add-on is charged separately on an à la carte basis.

Contact us for more information.

Do you offer special pricing for non-profit organizations?

Yes, we do. Please reach out to us for more details.

Services

Do you offer any services to get us started quickly?

Yes, we offer a Jumpstart program, Data Testing as a Service (DTaaS), Data Migration Testing Service, and also resources who are experts in Data Testing and iceDQ.

What does the Jumpstart program entail?

Along with installation, configuration, and training, the Jumpstart program includes the additional work of implementing test rules and mentoring on live projects.

iceDQ resources collect requirements, implement audit rules, and share their experience with client resources. Eventually, the customer takes over these activities, having gained a complete grasp of the product through ongoing mentoring.

What is Data Testing as a Service?

Many organizations want to focus on their core activities and would like someone else to manage their data testing and monitoring needs. In such cases, simply hiring an external resource does not work: the external resource is responsible for tasks but not for the success or failure of the project, so that risk stays with the customer.

iceDQ specializes in data-related testing and monitoring and offers “Data Testing as a Service,” wherein the iceDQ team ultimately manages the resources and testing activities.

The number of resources and their locations are planned together with the client. Depending on the contract and the options the customer selects, resources can be onsite, nearshore/offsite, or offshore.

Timeframe: Annual /Project

What is Data Migration Testing Service?

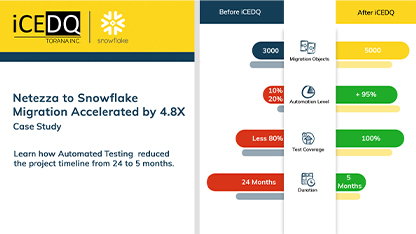

Many organizations are moving to cloud platforms such as Snowflake, Redshift, and Azure DW. These Data Migration projects have short timelines, which is why customers need help accelerating their testing.

This is where the iceDQ team comes in and offers its expertise on how to do Data Migration testing. We can completely manage the Data Migration Testing or provide resources that are experts in iceDQ and testing.

We have successfully helped customers migrate their on-prem databases to Redshift and Snowflake.

Please reach out to us for further details.

Do you have short or long term services?

Yes, we do. We offer services around Data Testing, ETL, Data Modeling, BI Analytics.

Can iceDQ help me accelerate testing for Cloud Data Warehouse Migration?

Yes, our services team has built tools and processes to help organizations test their migrations quickly and effectively.

Scheduling

Can I schedule test runs in iceDQ?

Yes, you can. We have a built-in scheduling application, but you can also use any external scheduling tool, such as Airflow, Control-M, or Tidal, to execute iceDQ jobs.
